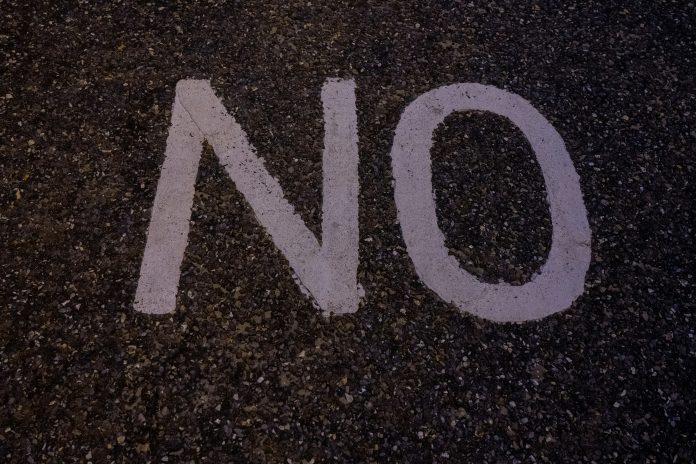

Building with generative AI throws up no shortage of technical hurdles — but according to one senior figure in the regulatory technology space, the greatest challenge has nothing to do with model selection, data quality, or infrastructure. It is the simple act of saying no.

That is the view of the head of innovation lab at Zeidler Group, who raised the issue during a recent industry Q&A. Having spent considerable time at the forefront of generative AI adoption, overseeing pilots that have since matured into full commercial products, the executive has observed a telling shift in how organisations approach the technology. Questions that once focused on opportunity and competitive advantage are gradually giving way to harder, more nuanced enquiries about what generative AI can and cannot realistically deliver.

The current climate does little to make restraint easy. Generative AI continues to dominate the professional conversation, with a relentless stream of new tools, platforms, and use cases entering the market every week. The result is an atmosphere of near-unbridled optimism — one that the Zeidler Group executive compares to earlier waves of industry enthusiasm around big data, no-code development, and web3. In each of those cycles, the promise of transformation proved difficult to resist, and generative AI appears to be following a similar pattern.

The Zeidler Group head of innovation lab said, “It’s reminiscent of the frenzies around big data, no-code, and web3 that captured industry’s consciousness, changing how firms operate and evolve with time.”

Against this backdrop, pushing back on a proposed project can feel deeply uncomfortable — a contrarian stance in a room full of believers. But it is precisely this discomfort, the executive argues, that makes the ability to say no such a valuable and underappreciated skill.

Determining when to decline a project is, however, far from straightforward. There is no formula or scoring system that reliably produces the right answer, they said. Instead, the decision demands careful judgement across three broad areas: the technological limitations of LLMs as they apply to the specific task at hand; the project’s requirements around accuracy, cost, and delivery timeframes; and the strategic rationale underpinning the initiative. Even projects that look nearly identical at a high level can diverge sharply once these factors are examined in detail.

Consider the difference between using AI to review an early-stage document draft and deploying it to sign off a final version before submission, or the distinction between a human-in-the-loop workflow and one designed for full automation. Both pairings may sound similar in a boardroom conversation, yet they carry very different risk and feasibility profiles in practice. Getting to grips with these distinctions early is essential to making sound decisions about whether to proceed.

Equally important is recognising that a refusal is not always final, they said. The technology landscape shifts quickly, and a project that was rightly turned down a year ago may be entirely viable today. The Zeidler Group executive points to multimodal LLMs as a case in point: before their emergence, accuracy rates for graph and chart detection hovered around 50%, making a wide range of document-processing projects effectively unworkable. Those same projects are now being greenlighted. Revisiting past decisions in light of new capabilities is, therefore, a healthy and necessary discipline.

Copyright © 2026 FinTech Global